If you're building APIs with FastAPI, Flask, or Django, you’ve probably heard:

“Async is faster.” “Use async for better performance.” “Sync is outdated.”

But is that actually true?

The reality is more nuanced.

In Python APIs, async vs sync does not automatically mean faster vs slower. The difference depends on what your application spends its time doing: CPU-bound work such as heavy computation, or I/O-bound work such as database queries and calls to external APIs.

This article explains:

- 🧠 What synchronous and asynchronous code actually mean

- 🚀 When async improves performance

- ❌ When async does absolutely nothing

- ⚠️ Common misconceptions

- 🏗️ Real-world backend scenarios

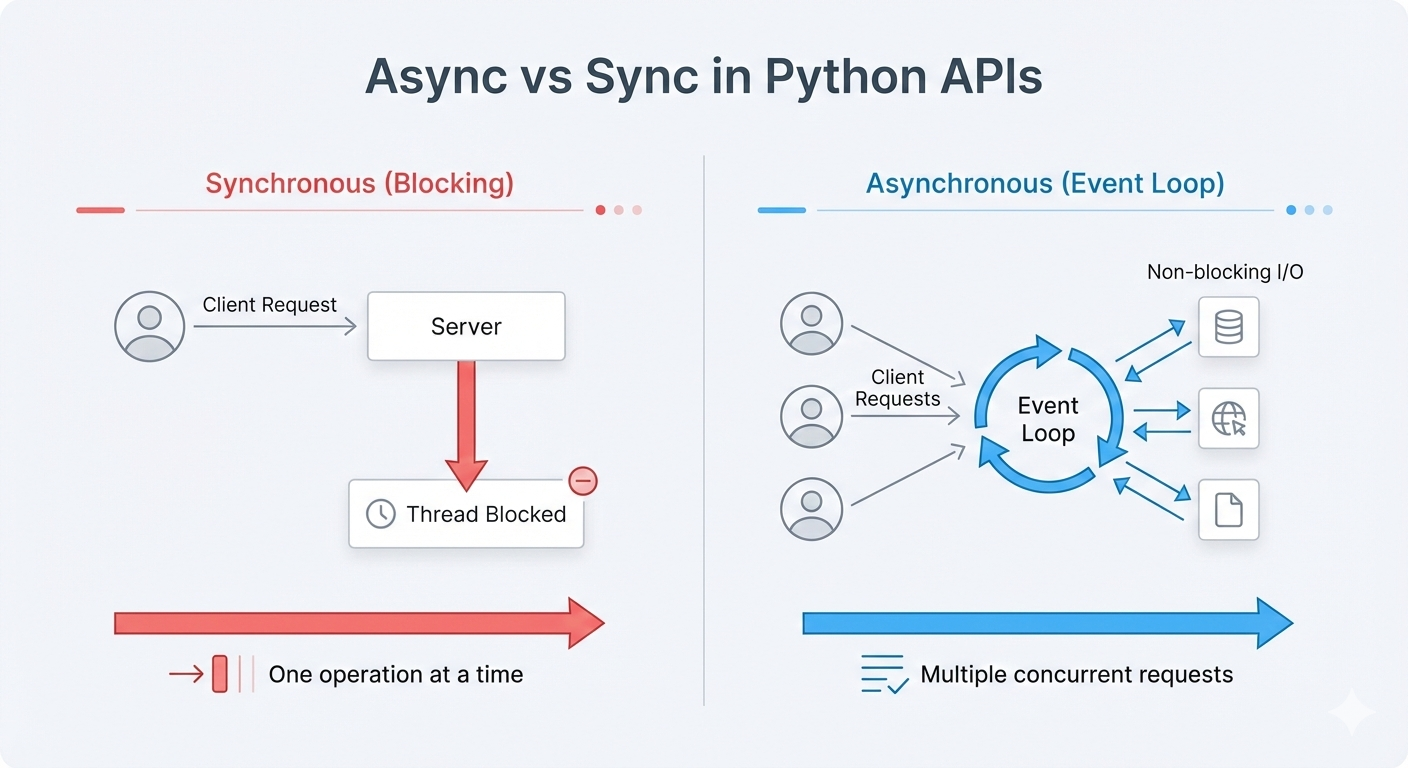

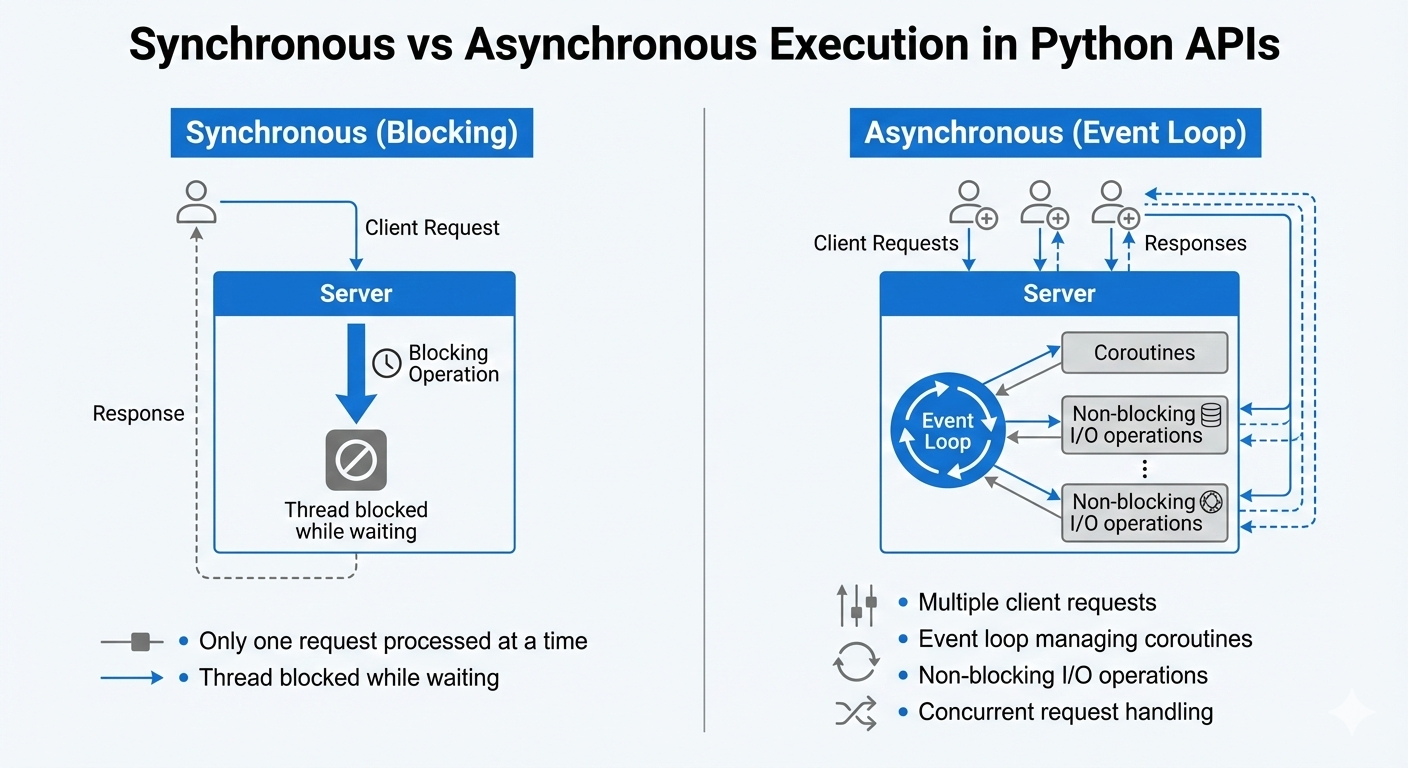

🔄 What Is Synchronous (Sync) Execution?

Synchronous code executes line by line, blocking until each task completes.

Example:

    import requests

    def get_data():
        # requests.get blocks this thread until the response arrives
        result = requests.get("https://api.example.com/data")
        return result.json()

Here:

- ⛔ The function waits until the HTTP request finishes.

- 🔒 The thread is blocked.

- 🧍 No other request is handled by that worker during the wait.

In WSGI-based frameworks like Flask (default setup), this is the typical behavior.

📌 Characteristics of Sync APIs

- 🧵 One request handled per worker thread/process

- ⏳ Blocking operations stop execution

- 🧩 Simpler mental model

- 🛠️ Easier debugging
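To make the "one request per worker" point concrete, here is a minimal sketch that uses only the standard library. The `handle_request` and `serve` names are hypothetical, and `time.sleep` stands in for a blocking call such as a database query. With 2 workers and 4 requests, the batch has to run in two waves.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def handle_request(request_id: int) -> int:
    """Hypothetical sync handler: the sleep stands in for a blocking I/O call."""
    time.sleep(0.1)
    return request_id


def serve(num_requests: int, num_workers: int) -> tuple[list[int], float]:
    """Run the requests on a fixed pool of worker threads and time the batch."""
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=num_workers) as pool:
        results = list(pool.map(handle_request, range(num_requests)))
    return results, time.perf_counter() - start


# 4 requests, 2 workers: two requests must wait for a free worker.
results, elapsed = serve(num_requests=4, num_workers=2)
print(f"4 requests on 2 workers took {elapsed:.2f}s")
```

Raising `num_workers` to 4 lets all four requests wait in parallel, which is how sync deployments typically scale: by adding workers.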

🔁 What Is Asynchronous (Async) Execution?

Asynchronous code allows the program to pause while waiting for I/O, and continue handling other tasks.

Example in FastAPI:

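    import httpx

    async def get_data():
        # httpx.get is synchronous; awaiting a request needs an AsyncClient
        async with httpx.AsyncClient() as client:
            result = await client.get("https://api.example.com/data")
        return result.json()

Here: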

- 🔄 The function yields control while waiting.

- 🔁 The event loop handles other requests.

- ⚡ One worker can manage many concurrent waiting operations.

Async is powered by:

- 🔁 Event loop

- 🧩 Coroutines

- ⏳ Awaitable objects

- 🚀 ASGI servers like Uvicorn
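The pieces listed above can be seen working together in a small sketch. It assumes `asyncio.sleep` as a stand-in for real I/O, and the `handle` coroutine and its names are illustrative only. Two coroutines share one event loop, and each `await` is a point where the loop can switch to the other.

```python
import asyncio


async def handle(name: str, delay: float, log: list[str]) -> None:
    """Hypothetical request handler that records when it starts and finishes."""
    log.append(f"{name}: start")
    await asyncio.sleep(delay)  # yields to the event loop while "waiting on I/O"
    log.append(f"{name}: done")


async def main() -> list[str]:
    log: list[str] = []
    # Both coroutines are scheduled on the same event loop (same thread).
    await asyncio.gather(handle("A", 0.05, log), handle("B", 0.01, log))
    return log


events = asyncio.run(main())
print(events)
# ['A: start', 'B: start', 'B: done', 'A: done']
```

Request B starts before A has finished and completes first, because A gave up control at its `await` instead of blocking the worker.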

🎯 The Critical Question: When Does Async Actually Matter?

It depends entirely on workload type.

🚀 Scenario 1: I/O-Bound Work (Async Helps)

If your API:

- 🌐 Calls external APIs

- 🗄️ Reads from a database

- ⚡ Accesses Redis

- 📡 Makes HTTP requests

- 📁 Reads files from disk (offloaded to a thread pool, since ordinary file reads block the event loop)

- 🌍 Waits on network responses

Then async can significantly improve concurrency.

💡 Why?

During I/O wait time, the event loop can:

- 🔄 Switch to another request

- ⚡ Avoid idle CPU cycles

- 📈 Increase throughput without adding threads

Example use cases:

- 🤖 Chatbot backend calling LLM API

- 🔗 Microservice fetching multiple services

- 💳 Payment gateway verification

Async improves scalability here.
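As a rough illustration of that scalability claim, the sketch below runs 100 simulated I/O waits on a single thread. `fake_io_call` is a hypothetical stand-in for a network call, and `asyncio.sleep` replaces the real wait, so the numbers only show the shape of the effect.

```python
import asyncio
import threading
import time


async def fake_io_call(delay: float) -> int:
    """Simulated network wait; returns the id of the thread it ran on."""
    await asyncio.sleep(delay)
    return threading.get_ident()


async def main(num_calls: int, delay: float) -> tuple[set[int], float]:
    start = time.perf_counter()
    thread_ids = await asyncio.gather(
        *(fake_io_call(delay) for _ in range(num_calls))
    )
    return set(thread_ids), time.perf_counter() - start


threads_used, elapsed = asyncio.run(main(num_calls=100, delay=0.1))
print(f"100 waits of 0.1s finished in {elapsed:.2f}s on {len(threads_used)} thread(s)")
```

Run one after another, the same 100 waits would take about 10 seconds. Here they overlap, so the total stays close to a single wait, and no extra threads are created.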

❌ Scenario 2: CPU-Bound Work (Async Does NOT Help)

If your API:

- 🧠 Runs heavy ML inference

- 🖼️ Performs image processing

- 🔐 Does encryption/decryption

- 📊 Processes large datasets

- 🧮 Executes complex calculations

Async will NOT make it faster.

🧱 Why?

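CPU-bound tasks never reach an `await`, so they do not release the event loop: every other request on that worker waits until the computation finishes.

In standard CPython builds, the GIL (Global Interpreter Lock) also allows only one thread to execute Python bytecode at a time, so adding threads does not speed up pure-Python computation either.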


In this case, you need:

- 🧵 Multiprocessing

- 🏗️ Background workers (Celery, RQ)

- 📬 Task queues

- 🖥️ Separate compute services

Async does not solve CPU bottlenecks.
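A small sketch can show why. It assumes a toy prime counter as the CPU-bound work, and `count_primes`, `cpu_task`, and `ticker` are hypothetical names. The ticker yields to the event loop on every tick, while the CPU task never awaits, so nothing else can run between its start and end.

```python
import asyncio


def count_primes(limit: int) -> int:
    """Pure-Python CPU work: contains no await, so it never yields."""
    return sum(
        1
        for n in range(2, limit)
        if all(n % d for d in range(2, int(n ** 0.5) + 1))
    )


async def cpu_task(log: list[str]) -> int:
    log.append("cpu start")
    result = count_primes(20_000)  # holds the event loop the whole time
    log.append("cpu end")
    return result


async def ticker(log: list[str], ticks: int) -> None:
    for _ in range(ticks):
        log.append("tick")
        await asyncio.sleep(0)  # hand control back to the event loop


async def main() -> list[str]:
    log: list[str] = []
    await asyncio.gather(ticker(log, 3), cpu_task(log))
    return log


events = asyncio.run(main())
print(events)
# ['tick', 'cpu start', 'cpu end', 'tick', 'tick']
```

Even though `cpu_task` is declared with `async def`, no tick lands between "cpu start" and "cpu end". Moving the computation to another process or a background worker is what frees the loop.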

⚖️ Scenario 3: Simple CRUD API (Async Barely Matters)

If your API:

- 📦 Does basic database reads

- 👥 Has low traffic

- 🔒 Is internal-only

- 📉 Handles moderate load

You may not see a measurable difference.

Async can even introduce:

- 🧠 Additional complexity

- 🐛 Harder debugging

- 🔀 Mixed sync/async confusion

For many projects, sync is perfectly fine.

🆚 FastAPI vs Flask: Does Async Make FastAPI Faster?

FastAPI is built on ASGI and supports async natively.

Flask is traditionally WSGI (sync).


But here’s the truth:

- ❌ FastAPI is not faster just because it’s async.

- ✅ It’s faster due to Starlette + Uvicorn + efficient request handling.

If your `async def` FastAPI endpoints make blocking calls (like `requests` instead of `httpx`), each call stalls the event loop and you lose the async benefits.

Async only helps when:

- 🧵 The entire stack supports async

- 🗄️ Database drivers are async

- 🌐 HTTP clients are async

- ⛔ No blocking calls exist
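The cost of a single blocking call inside an async handler can be sketched with the standard library alone. Here `blocking_io` is a hypothetical stand-in for something like `requests.get`, and `asyncio.to_thread` (Python 3.9+) is one possible way to keep such a call off the event loop when no async driver is available.

```python
import asyncio
import time


def blocking_io(delay: float) -> float:
    """Stand-in for a blocking library call (e.g. a sync HTTP client)."""
    time.sleep(delay)
    return delay


async def handler_blocking(delay: float) -> float:
    # Blocking call made directly in a coroutine: the event loop is stuck here.
    return blocking_io(delay)


async def handler_offloaded(delay: float) -> float:
    # Same call moved to a worker thread, so the loop stays free.
    return await asyncio.to_thread(blocking_io, delay)


async def timed(handler, num_requests: int = 3, delay: float = 0.1) -> float:
    """Run several handlers concurrently and return the elapsed time."""
    start = time.perf_counter()
    await asyncio.gather(*(handler(delay) for _ in range(num_requests)))
    return time.perf_counter() - start


blocking_elapsed = asyncio.run(timed(handler_blocking))
offloaded_elapsed = asyncio.run(timed(handler_offloaded))
print(f"blocking: {blocking_elapsed:.2f}s, offloaded: {offloaded_elapsed:.2f}s")
```

The blocking version handles the three requests one after another despite the `async def`, while the offloaded version lets them wait in parallel. A native async client or driver avoids the extra threads entirely.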

⚠️ Common Misconceptions

❌ Myth 1: Async Makes Code Faster

It improves concurrency, not CPU speed.

❌ Myth 2: Async Is Always Better

Only for I/O-heavy workloads.

❌ Myth 3: Sync Cannot Scale

With enough worker processes or threads (for example, several Gunicorn workers), sync scales well for many workloads.

🧭 Real-World Decision Framework

Ask yourself:

1️⃣ Is my API heavily I/O-bound?

If yes → async makes sense.

2️⃣ Am I calling multiple external services?

If yes → async lets you issue the calls concurrently, so total latency approaches that of the slowest call instead of the sum of all calls.

3️⃣ Am I doing CPU-heavy computation?

If yes → use multiprocessing instead.

4️⃣ Do I need WebSockets or streaming?

If yes → async (ASGI) is better.

5️⃣ Is my team comfortable with async patterns?

If no → sync may be safer.
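Question 2 is the easiest one to check with a quick experiment. The sketch below assumes three hypothetical downstream services, each simulated with a 0.1s `asyncio.sleep`, and compares awaiting them one by one with fanning them out through `asyncio.gather`.

```python
import asyncio
import time

# Hypothetical services and their simulated response times in seconds.
SERVICES = {"users": 0.1, "orders": 0.1, "billing": 0.1}


async def call_service(name: str, delay: float) -> str:
    await asyncio.sleep(delay)  # stand-in for an HTTP call
    return f"{name}: ok"


async def fetch_sequential(services: dict[str, float]) -> list[str]:
    # Each await finishes before the next call starts: latencies add up.
    return [await call_service(name, delay) for name, delay in services.items()]


async def fetch_concurrent(services: dict[str, float]) -> list[str]:
    # All calls are in flight at once: total time is roughly the slowest call.
    calls = (call_service(name, delay) for name, delay in services.items())
    return list(await asyncio.gather(*calls))


async def timed(fetch, services: dict[str, float]) -> tuple[list[str], float]:
    start = time.perf_counter()
    results = await fetch(services)
    return results, time.perf_counter() - start


seq_results, seq_elapsed = asyncio.run(timed(fetch_sequential, SERVICES))
con_results, con_elapsed = asyncio.run(timed(fetch_concurrent, SERVICES))
print(f"sequential: {seq_elapsed:.2f}s, concurrent: {con_elapsed:.2f}s")
```

Both versions return the same results in the same order. Only the concurrent one avoids paying for each call's latency in turn, which matters when a single request depends on several services.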

📊 Performance Reality (Production View)

🚀 Async:

- Handles high concurrency better

- Reduces thread overhead

- Supports streaming & WebSockets

- Efficient under I/O-heavy load

🧱 Sync:

- Simpler architecture

- Predictable behavior

- Easier debugging

- Often sufficient for many SaaS backends

📌 When Async Actually Matters Most in 2026

- 🤖 Real-time AI applications

- 🎧 Streaming APIs

- 🧠 LLM-based backends

- 🌍 High-concurrency microservices

- 🔄 WebSocket applications

- 📡 Long-polling services

🏁 Final Verdict

Async vs Sync is not about “modern vs old.”

It’s about:

⚖️ I/O-bound vs CPU-bound workload.

Use async when:

- ⏳ Waiting dominates execution time.

Use sync when:

- 🧠 CPU work dominates execution time (and scale it with processes or background workers, not coroutines).

- 🧩 Simplicity is more valuable than concurrency.

The smartest backend engineers don’t ask:

“Should I use async?”

They ask:

🎯 “Is my workload I/O-bound?”

Answer that, and the decision becomes obvious.